Hello all of you amazing Lunduke Journal subscribers!

With March now behind us, I wanted to give you crazy kids a quick “behind the scenes” look at the stats for The Lunduke Journal. Because Inside Baseball stuff is fun.

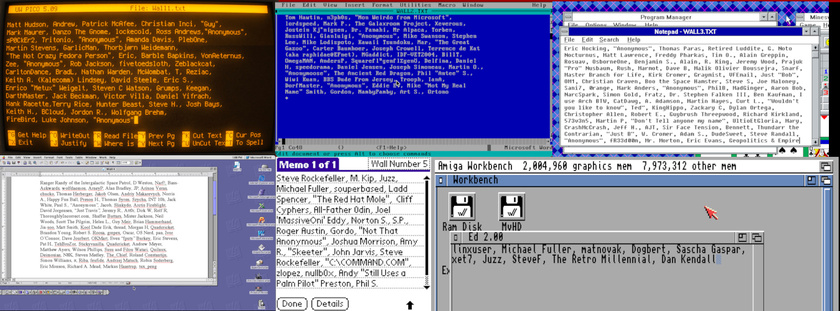

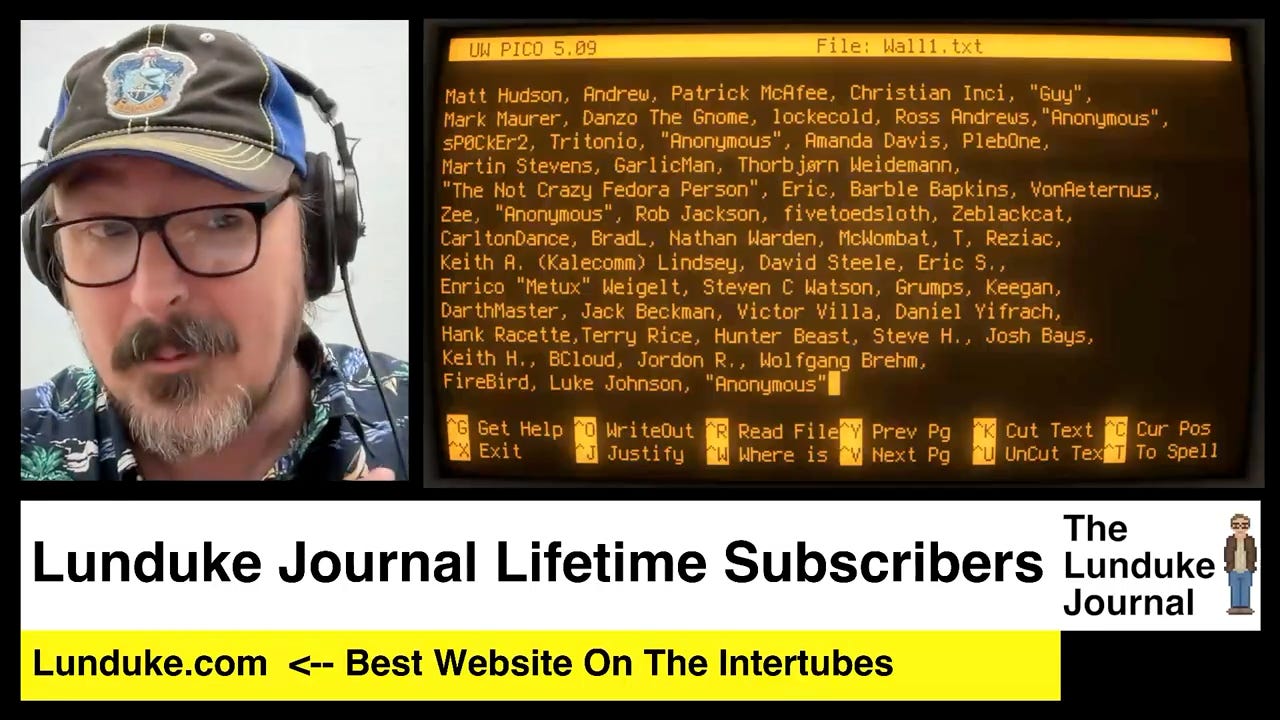

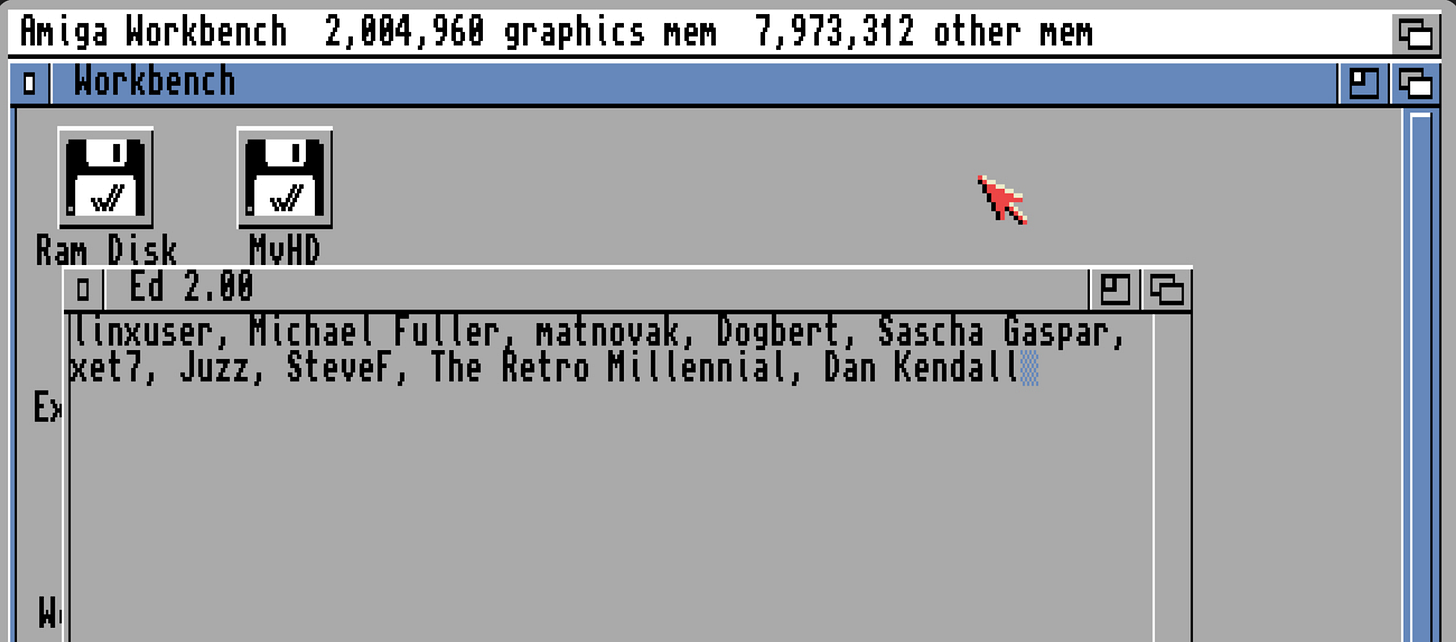

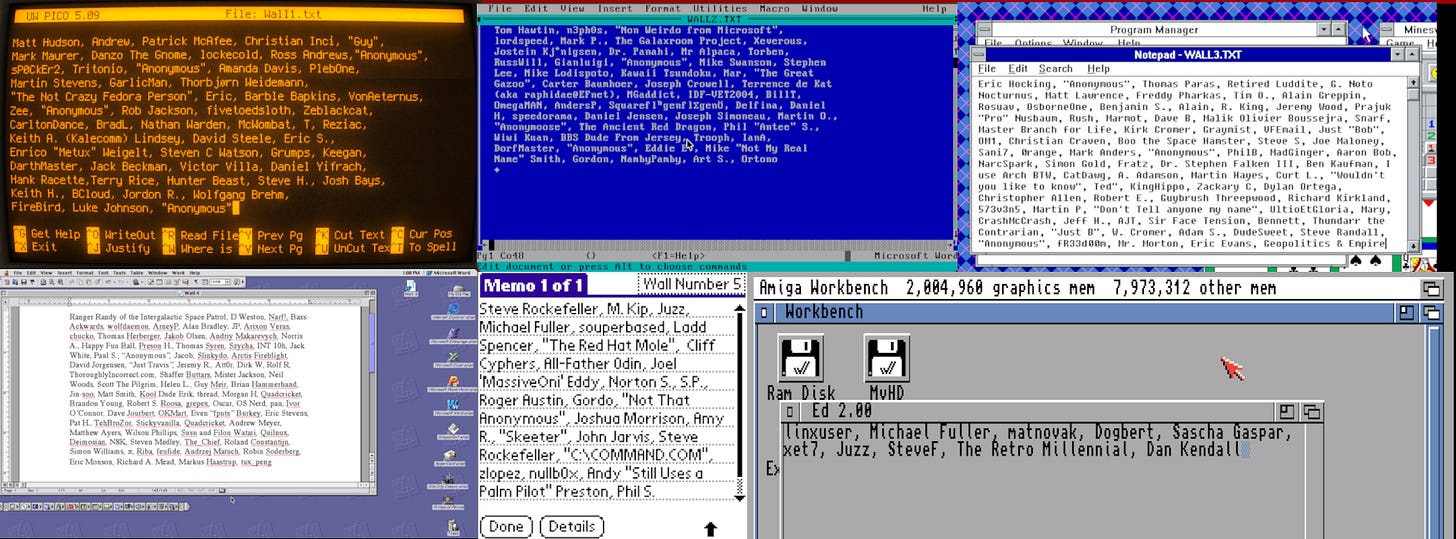

The Amiga Wall!

But before we dive into charts and numbers… behold! The brand new 6th Lifetime Subscriber Wall of ~~Shame~~ Awesomeness! The AmigaOS 3.1 Wall!

Every Lifetime Subscriber Wall (which I show at the end of each video) is a real screenshot from a different computing platform. Mostly retro. All awesome.

If you’d like to see your name listed on the new AmigaOS 3.1 wall, grab a Lifetime Subscription (if you don’t already have one) and toss me an email. I update the walls about once each week with new names.

The last few Lifetime Walls filled up incredibly quickly. So if the Amiga Wall interests you, I wouldn’t wait too long. Hint, hint.

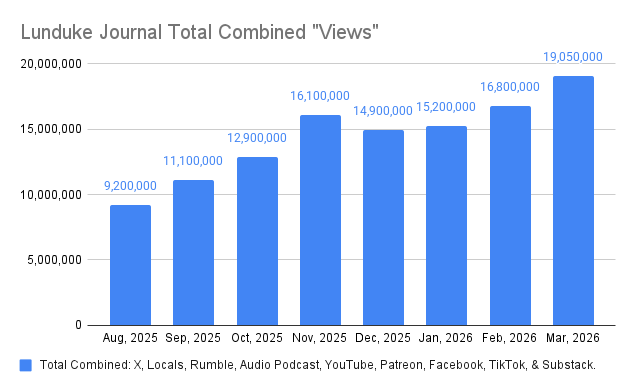

March 2026 Stats

The big news: Total “views” were way, way up in March.

A fair bit beyond what was anticipated. A hair over 19 million during the month.

That’s in total, across all platforms. As usual, the audio podcast and X lead the way in terms of total views/listens for shows (by quite a lot).

Interestingly, we saw significant “views” growth on even the smallest platforms in March (Facebook and TikTok).

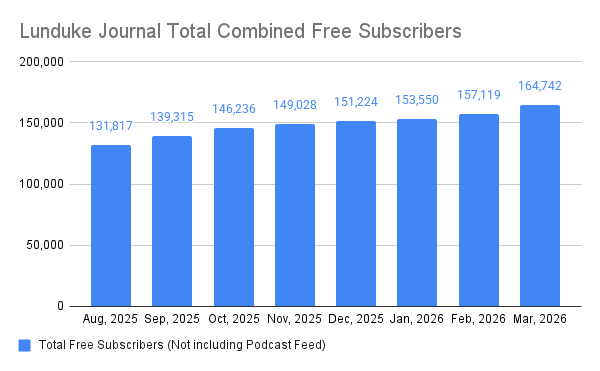

Free subscribers also took a major jump in March, with the largest one-month gain ever (I’m pretty sure; certainly the largest this year or last). Up 7,623 over the month before.

Again, new subscribers grew across the board. The biggest gains were seen on X, but all platforms saw a significant bump.

Hard to complain about that!

The top 3 shows for March were all focused on the Age Verification laws:

Arch Linux Says Opposing Age Verification is Code of Conduct Violation

Omarchy Linux Rejects "Retarded" California Age Verification Law

While those were the top 3… it’s worth noting that the top 10 (and, really, the top 15 or so) shows for the month were all incredibly close in terms of viewership numbers.

As always, a huge thank you to all of The Lunduke Journal subscribers. You make all of this possible.

-Lunduke