Was I walking the dog or doing a comprehensive code review? Both

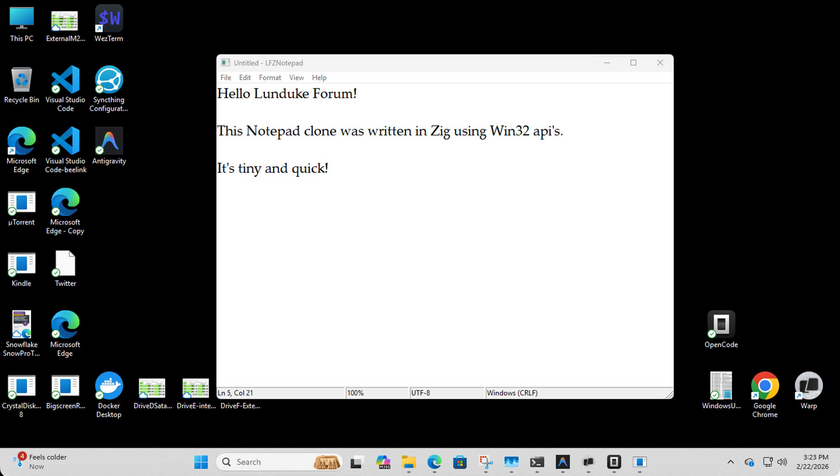

This weekend's Notepad projects were hobby level. The end result was working apps. That is not "done done" from a professional pov.

What about reviewing the code? What about the fact that Lee purposefully picked coding languages he didn't know Jack Spit about? Lee CAN NOT contribute to reviewing the code. Therefore this has been a useless exercise.

Not so much, though we will hit the truth in the above at the end.

I can’t review the code in a meaningful way, but I CAN direct an AI to do so. Saying “review the code” is NOT going to produce optimal result. I used ChatGPT to write a code review prompt on

1. Security & Safety

2. Correctness & Reliability

3. Architecture & Maintainability

4. Developer Experience

I have many more types of review built into my AgentFlow methodology. Why not? The AI is tireless.

I then used Codex web - which is connected to my github - and gave it the prompt. I did the same for the Zig and Forth versions Notepad - and then went and walked the dog. When I got back the reviews were done.

The review prompt: https://github.com/leebase/fnotepad/blob/master/docs/code-reviews/code-review-prompt.md

The Overview review: https://github.com/leebase/fnotepad/blob/master/docs/code-reviews/overview-code-review-022326.md

The review: https://github.com/leebase/fnotepad/blob/master/docs/code-reviews/code-review-022326.md

But Lee - what about the AI missing stuff? This is automated baseline. It found genuine issues that I likely wouldn’t have found even if I new the languages. I can feed that back into the AI to fix. Rerun the review until the AI can’t find any more issues.

Imagine - imagine if everyone on your team had this ability to comprehensively review their own code such that they get everything the AI can discover fixed. THEN - you move on to human code review?

Here is a taste of the output from the Forth code review:

Highest-Leverage Issues to Fix First

- 🔴 High Risk — Terminal raw mode restoration is not fail-safe; exceptions (

throw) can bypass cleanup and leave the terminal broken.

- 🔴 High Risk — Long filenames are silently truncated to 256 bytes and reused for the write path, which can save to an unintended file.

- 🟠 Medium Risk — Opening files larger than

MAX-BUFFER truncates content with no user-visible warning.

- 🟠 Medium Risk — Insert/paste overflow is silently dropped when the gap is full, causing implicit data-loss behavior.

- 🟡 Medium Maintainability —

run-editor input dispatch is a deep dup ... if ... then chain that is hard to extend safely.

- 🟡 Low/Medium Safety + DX — Helper scripts write to absolute local paths (

/home/lee/...), which is risky and non-portable.

- 🟡 Low Consistency — README claims “Zero Dependencies,” but implementation shells out to

stty via system; docs and runtime assumptions are misaligned.

Back to “the truth” in the criticism. There are domains where even the above process is not good enough. Lee is NOT equipped to judge the quality of this code. That is the truth. AI can indeed allow someone to create artifacts in domains they are not expert in. But without being an expert yourself, you can’t validate the work.

But you can HIRE a real human to do the validation. You can get a lot done, and then hire out what remains. And while I’m not a Forth expert of any kind - I am a legit principal level solutions architect.